Incremental attribution: How to measure the actual advertising success of your Meta Ads

Written by: Max-Raphael Feibel

Published on: December 16, 2025

Last modified on: February 18, 2026

The fundamental problem: correlation vs. causality

Digital advertising platforms provide impressive dashboards. Clicks, impressions, conversions, ROAS. Everything is measurable, everything is traceable. At least at first glance.

The problem runs deeper: when eBay paused its Brand Search Ads, a large-scale field experiment showed that sales remained largely unchanged. These ads captured existing demand rather than generating new growth.

This insight is uncomfortable, but fundamental: a significant portion of the conversions that advertising platforms claim for themselves would have occurred even without the respective ad.

How standard attribution works

Traditional attribution models operate with rules: if someone sees or clicks on an ad and makes a purchase within a defined time frame, the conversion is attributed to the ad. This is technically correct, but logically problematic.

An example: A person is planning to make a purchase from you. Shortly before typing in your URL, they happen to see one of your ads in the feed. They don't click on it, enter the URL directly, and make the purchase. Standard attribution assigns this conversion to the ad, even though the person would have made the purchase anyway. The ad was present, but not decisive for the purchase.

This confusion between correlation (ad was seen → purchase made) and causality (purchase made because ad was seen) pervades all rule-based attribution models.

Multi-channel exacerbates the problem

In reality, no channel operates in isolation. A typical scenario: A person sees your Meta ad but does not click on it. Later, they search for your product on Google, click on a Google Ads ad, and make a purchase.

Both platforms claim the conversion: Google Ads according to last-click logic, Meta according to view-through logic. Both measurements are technically correct. The question of which channel actually influenced the purchase decision, or whether the customer would have bought anyway, remains unanswered.
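The double counting can be made concrete with a small sketch. The order IDs and the two sets of claimed conversions below are invented for illustration; they are not an export format of either platform.

```python
# Hypothetical data: order IDs each platform claims under its own rules.
meta_claimed = {"A1", "A2", "A3", "A4"}    # e.g. view-through attribution
google_claimed = {"A3", "A4", "A5"}        # e.g. last-click attribution
actual_orders = {"A1", "A2", "A3", "A4", "A5", "A6"}

# Adding up the platform dashboards counts some orders twice.
platform_total = len(meta_claimed) + len(google_claimed)
double_counted = meta_claimed & google_claimed
claimed_unique = meta_claimed | google_claimed

print(f"Sum of platform reports: {platform_total}")
print(f"Orders claimed by both:  {sorted(double_counted)}")
print(f"Unique orders claimed:   {len(claimed_unique)} of {len(actual_orders)}")
```

In this toy data the dashboards add up to more conversions than there are orders. Even after deduplication, nothing here says which of the claimed orders were actually caused by an ad.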

Incrementality quantifies the causal lift generated by marketing. It shows what has changed because your campaign existed. It reveals waste: you can see where ads are merely capturing organic demand. It informs budget decisions: you learn which channels actually generate new revenue and which ones are just taking credit for themselves.

What is incrementality and why is it the gold standard?

Incremental sales represent the increase in revenue generated by your advertising. Experiments (test vs. control, exposed vs. unexposed) are the only way to infer the causal effect of media on sales, just as randomized controlled trials are the only way to measure the effectiveness of a new drug.

The principle is simple:

- Test group: People or regions who see your ads

- Control group: Comparable individuals or regions without exposure to advertising

- Lift: The difference in results between the two groups

If your test group generates 1,250 purchases and an equally sized control group generates 1,000, your campaign has generated +250 incremental sales (+25% lift). That is the part that wouldn't have happened without your ads.
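The arithmetic can be sketched in a few lines. The function name and the group size of 100,000 users are assumptions made for this example. Scaling by group size only matters when the test and control groups differ in size.

```python
def incremental_lift(test_conversions, test_size, control_conversions, control_size):
    """Return (incremental conversions, relative lift) for a test/control split."""
    control_rate = control_conversions / control_size
    # Conversions the test group would be expected to produce without ads.
    expected_without_ads = control_rate * test_size
    incremental = test_conversions - expected_without_ads
    lift = incremental / expected_without_ads
    return incremental, lift


# Figures from the example above; the group size is an assumed value.
incremental, lift = incremental_lift(1250, 100_000, 1000, 100_000)
print(f"Incremental purchases: {incremental:.0f}, lift: {lift:.0%}")
```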

Methodologies based purely on observational models, such as media mix modeling (MMM) and multi-touch attribution (MTA), are useful in many cases, but they measure the correlative influence of media on sales. Correlation is not necessarily an indicator of causality.

The three main methods for measuring incrementality

Marketers have various options for measuring incrementality, each with its own methodology and optimal use cases. The three most common approaches are:

Geo-holdout tests

Geo-holdout tests divide geographic regions into test and control groups. Ads are served in one location, while another comparable market remains ad-free. By comparing performance, marketers attempt to measure the actual impact of the campaign.

The geo-split method identifies specific markets within a broader region that are statistically representative of that broader region and groups these markets into a test cell for experiments.

Practical example: Brand A wants to know the impact of its Facebook prospecting campaign on sales.

Structure: Select five states that are statistically representative of the overall business but have relatively low volume.

Implementation: Facebook Prospecting will be paused for 30 days in only these five states.

Result: Compare the transaction volume in these five states with the rest of the country (control group). The amount of "lost" sales when Facebook Prospecting is removed is considered Facebook Prospecting's "contribution" to the business.
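One simple way to quantify the "lost" sales is to assume that, without the pause, the five states would have kept their usual share of sales relative to the rest of the country. The sketch below uses that assumption. The function name and all figures are invented for illustration, and real geo tests typically use more robust market matching.

```python
def geo_holdout_contribution(pre_holdout, pre_rest, test_holdout, test_rest):
    """Estimate expected and lost sales in the paused markets during the test."""
    # Usual ratio of sales in the paused markets to sales in the rest of the country.
    baseline_ratio = pre_holdout / pre_rest
    # Sales the paused markets would have made had they moved like the rest.
    expected_holdout = baseline_ratio * test_rest
    lost_sales = expected_holdout - test_holdout
    return expected_holdout, lost_sales


# Hypothetical transaction counts for 30 days before and 30 days during the pause.
expected, lost = geo_holdout_contribution(
    pre_holdout=2_000, pre_rest=40_000, test_holdout=1_890, test_rest=42_000
)
print(f"Expected: {expected:.0f}, lost: {lost:.0f} ({lost / expected:.0%})")
```

Comparing against the pre-period ratio, rather than raw volumes, keeps nationwide swings such as seasonality from being mistaken for the effect of the pause.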

Advantages:

- Works across platforms

- Measures overall impact, including cross-channel effects

- No dependence on cookies or user-level tracking

Disadvantages:

- Requires sufficiently large, comparable markets

- Regional differences can distort results

- Not suitable for all channels (e.g., influencer marketing without geo-targeting)

User-level holdout tests (platform lift studies)

Many advertising platforms such as Facebook and Google allow marketers to create exposed and control groups within their ecosystems. Part of the target audience receives ads, while a comparable group is deliberately not shown them. The results are only valid within the platform conducting the test.

Meta offers Conversion Lift studies; Google offers Conversion Lift. Both platforms randomly divide users into test and control groups and compare their purchasing behavior.

Advantages:

- High precision through user-level randomization

- Available directly on the platform

- Good granularity for campaign-specific insights

Disadvantages:

- Only measures within its own platform

- Platform has vested interest in positive results

- Reduces reach during testing

Time-based holdout tests

Instead of splitting a target group, the time-based holdout approach pauses campaigns for a defined period of time and then restarts them to measure the difference in conversion rates.

Advantages:

- Easy to implement

- No geographical division required

Disadvantages:

- Seasonal effects can distort results

- Loss of momentum during the break

- Difficult to control external factors

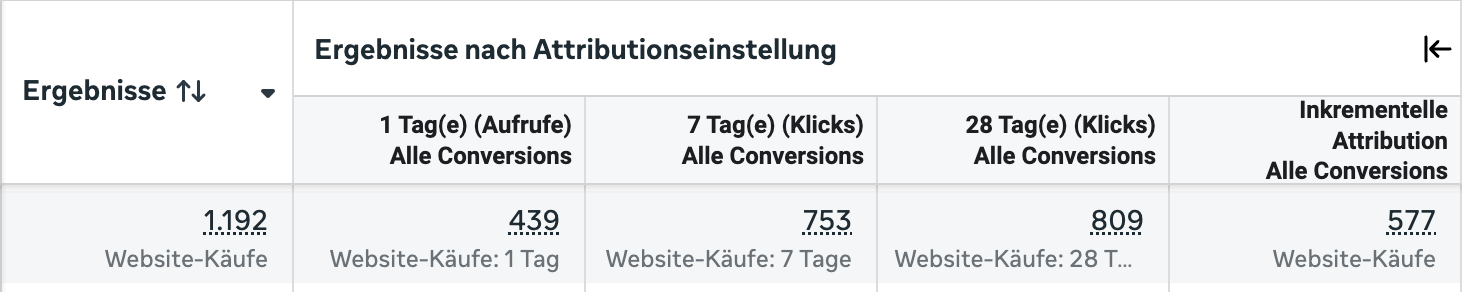

Meta's incremental attribution in detail

Since the end of 2024, Meta has offered an automated version of incrementality measurement based on years of data from conversion lift studies.

Technical functionality

The system works with machine learning-based holdout tests. Two statistical methods are used:

Pre-post analysis (difference-in-differences): This technique compares changes in conversion behavior before and after the test phase. By analyzing test and control groups, Meta can take into account external factors that influence both target groups, thereby isolating the actual impact of the ads.
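The difference-in-differences idea can be illustrated with the textbook estimator. This is not Meta's implementation, which is not public. The function name and the weekly conversion counts are hypothetical, and the two groups are assumed to be equally sized.

```python
def difference_in_differences(test_pre, test_post, control_pre, control_post):
    """Change in the test group minus the change in the control group."""
    test_change = test_post - test_pre
    # The control group's change captures external factors (season, promotions).
    control_change = control_post - control_pre
    return test_change - control_change


# Hypothetical weekly conversions before and during the test phase.
effect = difference_in_differences(
    test_pre=400, test_post=540, control_pre=400, control_post=440
)
print(f"Conversions attributable to the ads: {effect}")
```

A plain before/after comparison of the test group would credit the ads with 140 extra conversions. Subtracting the 40 that the control group gained without ads leaves 100.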

Baseline estimation (counterfactual modeling): Meta creates a model that predicts what your sales would have been without media exposure (the "baseline"). Actual sales are compared to this baseline, and the difference provides a more accurate view of the real impact of your ads.
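To show the principle, the sketch below uses a deliberately naive baseline: a straight line fitted to pre-campaign sales and extended into the campaign period. Meta's actual model is not public and is certainly more sophisticated. The function names and sales figures are assumptions for illustration only.

```python
def fit_linear_trend(values):
    """Least-squares line through (0, v0), (1, v1), ...; returns (slope, intercept)."""
    n = len(values)
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(values) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, values))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x


def incremental_vs_baseline(pre_period, campaign_period):
    """Sum of actual sales minus the extrapolated no-ads baseline."""
    slope, intercept = fit_linear_trend(pre_period)
    start = len(pre_period)
    baseline = [intercept + slope * (start + i) for i in range(len(campaign_period))]
    return sum(actual - predicted for actual, predicted in zip(campaign_period, baseline))


# Hypothetical weekly sales: four weeks without ads, two weeks with ads.
extra = incremental_vs_baseline([100, 102, 104, 106], [120, 123])
print(f"Sales above baseline: {extra:.0f}")
```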

What sets Meta's incremental attribution apart from traditional lift or brand studies is that the results are anchored in your first-party transaction data, not just platform metrics.

Activation and availability

Activation takes place directly in Meta Ads Manager via the attribution settings. The feature is available for the following campaign objectives: Conversions, Product Catalog Sales, and Sales (purchase-optimized).

You can view incremental conversions retroactively from April 1, 2025, by adjusting your reporting columns, even if the setting was not yet available at that time.

Google Ads Conversion Lift

With Conversion Lift, Google offers a comparable tool for measuring incrementality.

Conversion Lift is an incrementality tool that helps you measure the number of purchases, website visits, and other conversions that result directly from people seeing your ads.

Key developments in 2025

Google has lowered the minimum budget for incrementality testing from around $100,000 to $5,000. This should make it much easier for smaller advertisers to measure what actually works.

This development utilizes Bayesian methodology, which is based on "prior assumptions," to deliver high-quality insights with significantly lower data requirements.

Google has also improved how these tests work, with new statistical models that make results up to 50% more conclusive.

Available test formats

Google offers several testing approaches within its platform. Conversion lift studies can run at the user level, whereby Google randomly prevents certain users from seeing your ads, or at the geo level, whereby entire regions serve as control groups.

The tests are available for Search, Display, YouTube, Shopping, Performance Max, and Demand Gen.

Practical insights

When which campaigns benefit from incremental measurement

Not every campaign is equally suited to incremental attribution.

Acquisition campaigns:

Maximum added value

Prospecting priority: Growth through prospecting campaigns brings in new customers and is likely to generate higher incremental value.

When acquiring new customers, causal measurement shows precisely which campaigns actually trigger additional purchases and which merely accompany people who are already ready to buy.

Retargeting:

Limited usefulness

Reduced retargeting dependency: Retargeting typically shows a lower incremental impact, as it often reaches users who would buy anyway.

Retargeting measures may appear less effective under this model, because it discounts conversions that would have happened without the ad. This can understate the role of retargeting.

For retargeting campaigns, standard attribution with all touchpoints often provides more realistic insights. Its purpose is to complete purchase processes that have already been initiated, not to create new purchasing impulses.

Minimum requirements

Incremental attribution requires sufficient amounts of data. For Meta: approximately 50 conversions per week or more. For smaller campaigns, the tests do not provide statistically reliable findings.
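Why volume matters can be illustrated with a standard two-proportion z-test. This is a generic statistical check, not the method Meta uses. The function name and the sample figures are assumptions. Both scenarios show the same 25% lift and differ only in volume.

```python
import math


def two_proportion_z_test(conv_test, n_test, conv_control, n_control):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_test = conv_test / n_test
    p_control = conv_control / n_control
    pooled = (conv_test + conv_control) / (n_test + n_control)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_test + 1 / n_control))
    z = (p_test - p_control) / se
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Same 25% lift, hypothetical small and large campaigns.
z_small, p_small = two_proportion_z_test(25, 2_000, 20, 2_000)
z_large, p_large = two_proportion_z_test(1_250, 100_000, 1_000, 100_000)
print(f"Small campaign: p = {p_small:.2f}")
print(f"Large campaign: p = {p_large:.1e}")
```

With only a few dozen conversions, a 25% lift cannot be distinguished from random noise. With thousands, the same lift is clearly significant.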

Measurement quality depends on the tracking implementation. Server-side tracking via the Conversions API enables more accurate measurements over longer periods than browser-based cookie solutions.

New KPIs for measuring success

Incremental attribution gives rise to new, more meaningful metrics:

Cost per incremental conversion: The actual cost per additional purchase generated, not per attributed purchase.

Incremental ROAS (iROAS): To calculate the incremental return on ad spend for a channel, divide your newly discovered incremental revenue by your campaign's media spend. Incremental ROAS can serve as a key metric for budget decisions.

Incremental lift: The percentage increase in conversions due to advertising exposure compared to the control group.
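The three metrics follow directly from these definitions. In the sketch below, the function name and all input figures are hypothetical, and the lift calculation assumes test and control groups of equal size.

```python
def incremental_kpis(spend, incremental_conversions, incremental_revenue, control_conversions):
    """Compute the three incremental KPIs from test results and media spend."""
    return {
        # Spend divided by conversions that would not have happened without ads.
        "cost_per_incremental_conversion": spend / incremental_conversions,
        # Incremental revenue divided by media spend.
        "iroas": incremental_revenue / spend,
        # Additional conversions relative to the control group (equal group sizes).
        "incremental_lift": incremental_conversions / control_conversions,
    }


# Hypothetical campaign: 5,000 spend, 250 incremental purchases worth 20,000.
kpis = incremental_kpis(
    spend=5_000,
    incremental_conversions=250,
    incremental_revenue=20_000,
    control_conversions=1_000,
)
for name, value in kpis.items():
    print(f"{name}: {value:.2f}")
```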

Evaluation of results

The proportion of incremental conversions directly shows the contribution to overall success:

- Less than 10 percent: Budget should be allocated to other channels

- 10 to 20 percent: Critical review of channel use required

- 20 to 40 percent: Solid foundation with potential for optimization

- Over 40 percent: Budget increase makes sense with profitable cost per order
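One way to apply these bands is shown below, treating them as non-overlapping ranges with "less than 10 percent" as the strictest case. The function name is hypothetical. The share is assumed to mean incremental conversions divided by the conversions reported under standard attribution.

```python
def assess_incremental_share(incremental_conversions, attributed_conversions):
    """Map the share of incremental conversions to the bands listed above."""
    share = incremental_conversions / attributed_conversions
    if share < 0.10:
        recommendation = "reallocate budget to other channels"
    elif share < 0.20:
        recommendation = "critically review channel use"
    elif share <= 0.40:
        recommendation = "solid foundation, optimize"
    else:
        recommendation = "consider budget increase if cost per order is profitable"
    return share, recommendation


# Hypothetical campaigns: (incremental, attributed) conversions.
for incremental_count, attributed_count in [(50, 1_000), (150, 1_000), (300, 1_000), (500, 1_000)]:
    share, recommendation = assess_incremental_share(incremental_count, attributed_count)
    print(f"{share:.0%}: {recommendation}")
```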

Impact on campaign evaluation

Switching to incremental attribution will affect ROAS and CPA metrics, as they may previously have been inflated by a more generous attribution logic. Incremental attribution reflects the actual value of your ads and their contribution to total revenue more accurately.

Running campaigns with incremental and traditional models in parallel can help you understand how performance shifts and where Meta may have been overvalued previously. Ultimately, it is always advisable to link the choice of attribution model to the expected amount of data.

Limitations and critical considerations

Despite its value, incremental measurement has limitations that should be taken into account when interpreting the results.

Platform self-interest

As with any platform-specific attribution tool, there is a risk that Meta overestimates its contribution to conversions. If marketers rely solely on this model, they may allocate more budget to Meta ads at the expense of other high-performing channels.

A comparison from practice: We cross-checked the same Meta accounts with GA4, and the picture is not so rosy. GA4, which is typically more conservative in attribution, showed that only 67% of conversions were incremental. This means that 33% of conversions would have happened regardless of whether we ran ads on Meta or not.

Don't blindly trust Meta's figures. Any platform that reports on its own incrementality always deserves critical scrutiny. Use this setting as a directional signal, not as absolute truth. Consider it as input, not as the answer.

No cross-channel view

Unlike broader attribution methods such as multi-touch attribution (MTA) or media mix modeling (MMM), Meta's model does not take into account interactions that take place across different platforms. This means that marketers may need to supplement their analysis with additional measurement tools.

The main advantage of independent incrementality testing over on-platform studies is that it measures the net effect of a Meta program on sales, including any interaction effects that Meta may have with other channels. Example: In the absence of Facebook Prospecting, your affiliate program may be less productive.

Practical challenges

Geo-based testing requires pausing spending in certain markets, which can cause short-term revenue losses. Time-based holdouts (pausing campaigns for weeks/months) can slow momentum and impact long-term brand growth. Audience holdouts limit reach. By not showing ads to a portion of your target audience, you could miss out on valuable conversions.

Most marketers cannot afford to pause ads or markets for weeks or months. For them, manual incrementality testing can create more problems than insights.

Long-term effects are not recorded

Incremental tests measure whether an ad drives immediate conversions, but they don't take into account long-term marketing effects such as:

- Brand awareness ROI: If your ads increase searches for your brand, how do you measure that impact?

- Word-of-mouth and referrals: If someone sees your ad but later buys based on a friend's recommendation, does the test count that?

- Lifetime value (LTV): Incrementality tests rarely measure repeat purchases or long-term customer engagement.

Difference from conversion lift studies

Meta has been offering manual conversion lift studies for years. The new incremental attribution differs in key respects:

Conversion Lift Studies:

- Manual setup with a defined test period (typically 3-4 weeks)

- Complex to implement

- Requires a dedicated budget for the test phase

- Provides specific insights for specific questions

- Ideal for fundamental strategic questions

Incremental attribution:

- Automated and continuously active

- Based on historical data from Meta's Lift Studies

- No separate test phase required

- Provides continuous insights in reporting

- Ideal for ongoing campaign optimization

Conversion lift measurement is the best way to understand how much 'strategy A' influenced a particular outcome vs. 'strategy B'. A/B testing is still a viable option if both strategies reach very similar target groups with the same level of baseline intent.

Validation and best practices

Double-check results with third-party tools

Cross-check with independent tools. Platforms such as GA4, Triple Whale, or your own post-purchase surveys are essential for balancing the narrative.

While Meta's model offers valuable insights, many advertisers find it beneficial to validate their results with third-party measurement tools to gain a holistic understanding of their marketing performance.

Step-by-step implementation

- Select a campaign and KPI: For example, a Facebook campaign targeting add-to-cart conversions.

- Formulate a hypothesis: "This campaign will increase conversions by at least 10% above baseline."

- Set up control and test groups: Use a platform lift test or create your own random or geo holdout.

- Run the test for a complete conversion cycle: Avoid overlapping changes (such as price updates or promotions).

Continuous calibration

Implementation is not a one-time project. Campaigns should be evaluated regularly and attribution settings adjusted to changing market conditions.

After the changeover, benchmarks and year-on-year comparisons are based on different measurement methods and are no longer directly comparable. Fewer measured conversions mean more accurate data, not worse results.

Conclusion: Causal measurement as the new standard

Incremental tests have become the industry gold standard for understanding the true impact of advertising in a privacy-friendly way.

The key finding: Standard attribution systematically overestimates the impact of advertising. It measures correlations, not causality. Incremental measurement closes this gap through controlled experiments that show which conversions were actually caused by advertising.

The most important points:

- Causality instead of correlation: Incremental tests are the only way to measure true advertising impact.

- Cross-platform relevance: Meta, Google, and other platforms now offer integrated solutions.

- Do not view in isolation: Triangulation of incrementality, MMM, and attribution provides the most complete picture.

- Critical validation: Platform-specific measurements deserve independent cross-checking.

- Think long term: Short-term lift tests do not capture all marketing effects.

Nevertheless, this is a step in the right direction. The fact that Meta recognizes incrementality at all is a sign of where attribution is heading.
